In 1998, I unintentionally created a racially biased artificial intelligence algorithm. There are lessons in that story that resonate even more strongly today.

The dangers of bias and errors in AI algorithms are now well known. Why, then, has there been a flurry of blunders by tech companies in recent months, especially in the world of AI chatbots and image generators? Initial versions of ChatGPT produced racist output. The DALL-E 2 and Stable Diffusion image generators both showed racial bias in the pictures they created.

My own epiphany as a white male computer scientist came while I was teaching a computer science class in 2021. The class had just viewed a video poem by Joy Buolamwini, an AI researcher, artist and self-described poet of code. Her 2019 video poem “AI, Ain’t I a Woman?” is a devastating three-minute exposé of racial and gender biases in automatic face recognition systems – systems developed by tech companies like Google and Microsoft.

The systems often fail on women of color, incorrectly labeling them as male. Some of the failures are particularly egregious: The hair of Black civil rights leader Ida B. Wells is labeled as a “coonskin cap”; another Black woman is labeled as possessing a “walrus mustache.”

Echoing through the years

I had a horrible déjà vu moment in that computer science class: I suddenly remembered that I, too, had once created a racially biased algorithm. In 1998, I was a doctoral student. My project involved tracking the movements of a person’s head based on input from a video camera. My doctoral adviser had already developed mathematical techniques for accurately following the head in certain situations, but the system needed to be much faster and more robust. Earlier in the 1990s, researchers in other labs had shown that skin-colored areas of an image could be extracted in real time. So we decided to focus on skin color as an additional cue for the tracker.

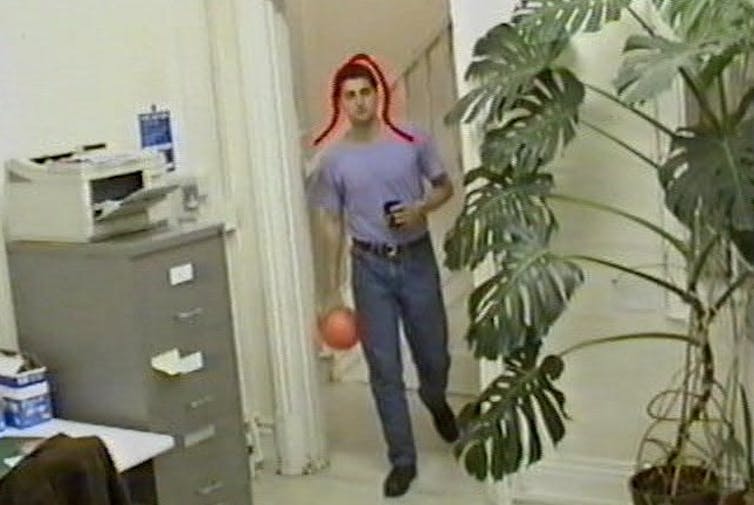

The author’s 1998 head-tracking algorithm used skin color to distinguish a face from the background of an image.

Source: John MacCormick, CC BY-ND

I used a digital camera – still a rarity at that time – to take a few shots of my own hand and face, and I also snapped the hands and faces of two or three other people who happened to be in the building. It was easy to manually extract some of the skin-colored pixels from these images and construct a statistical model for the skin colors. After some tweaking and debugging, we had a surprisingly robust real-time head-tracking system.
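For readers who want to see what "a statistical model for the skin colors" can look like in practice, here is a minimal sketch. It is not the 1998 code: the text above does not say which model was used, so the single Gaussian over brightness-normalized color, the function names, the threshold and the sample pixel values are all assumptions made for illustration.

```python
# Hypothetical sketch of a skin-color model: one 2-D Gaussian fitted to
# normalized (r, g) chromaticity. The model type, names, threshold and
# sample values are assumptions, not details taken from the article.


def chromaticity(pixel):
    """Map an (R, G, B) pixel to brightness-normalized (r, g)."""
    red, green, blue = pixel
    total = red + green + blue
    if total == 0:
        return (1 / 3, 1 / 3)  # treat pure black as neutral gray
    return (red / total, green / total)


def fit_skin_model(skin_pixels):
    """Estimate the mean and 2x2 sample covariance of the skin samples."""
    points = [chromaticity(p) for p in skin_pixels]
    n = len(points)
    mean_r = sum(r for r, _ in points) / n
    mean_g = sum(g for _, g in points) / n
    var_r = sum((r - mean_r) ** 2 for r, _ in points) / (n - 1)
    var_g = sum((g - mean_g) ** 2 for _, g in points) / (n - 1)
    cov_rg = sum((r - mean_r) * (g - mean_g) for r, g in points) / (n - 1)
    return (mean_r, mean_g), (var_r, cov_rg, var_g)


def mahalanobis_sq(pixel, model):
    """Squared distance of a pixel from the model, in units of its spread."""
    (mean_r, mean_g), (var_r, cov_rg, var_g) = model
    r, g = chromaticity(pixel)
    dr, dg = r - mean_r, g - mean_g
    det = var_r * var_g - cov_rg ** 2
    # Uses the closed-form inverse of the 2x2 covariance matrix.
    return (var_g * dr * dr - 2 * cov_rg * dr * dg + var_r * dg * dg) / det


def looks_like_skin(pixel, model, threshold=9.0):
    """Accept a pixel if it lies close enough to the sampled colors."""
    return mahalanobis_sq(pixel, model) <= threshold


# Made-up RGB samples for illustration only; not data from the article.
SAMPLE_PIXELS = [
    (224, 172, 150), (210, 160, 140), (198, 145, 120),
    (235, 185, 165), (215, 158, 132), (190, 140, 125),
]

model = fit_skin_model(SAMPLE_PIXELS)
print(looks_like_skin(SAMPLE_PIXELS[0], model))  # one of the training samples
print(looks_like_skin((20, 40, 200), model))     # a saturated blue pixel
```

A model like this knows only the colors it was shown. Anything far from that handful of samples falls outside the threshold, so the choice of whose hands and faces get photographed determines whom the tracker can see.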

Not long afterward, my adviser asked me to demonstrate the system to some visiting company executives. When they walked into the room, I was instantly flooded with anxiety: the executives were Japanese. In my casual experiment to see if a simple statistical model would work with our prototype, I had collected data from myself and a handful of others who happened to be in the building. But 100% of…