A recently developed electronic tongue is capable of identifying differences in similar liquids, such as milk with varying water content; diverse products, including soda types and coffee blends; signs of spoilage in fruit juices; and instances of food safety concerns.

The team, led by researchers at Penn State, also found that results were even more accurate when artificial intelligence (AI) used its own assessment parameters to interpret the data generated by the electronic tongue.

The researchers published their results Oct. 9 in Nature.

According to the researchers, the electronic tongue can be useful for food safety and production, as well as for medical diagnostics. The sensor and its AI can broadly detect and classify various substances while collectively assessing their respective quality, authenticity and freshness. This assessment has also provided the researchers with a view into how AI makes decisions, which could lead to better AI development and applications, they said.

“We’re trying to make an artificial tongue, but the process of how we experience different foods involves more than just the tongue,” said corresponding author Saptarshi Das, Ackley Professor of Engineering and professor of engineering science and mechanics. “We have the tongue itself, consisting of taste receptors that interact with food species and send their information to the gustatory cortex—a biological neural network.”

The gustatory cortex is the region of the brain that perceives and interprets various tastes beyond what can be sensed by taste receptors, which primarily sort foods into the five broad categories of sweet, sour, bitter, salty and savory. As the brain learns the nuances of the tastes, it can better differentiate subtle flavors. To artificially imitate the gustatory cortex, the researchers developed a neural network, which is a machine learning algorithm that mimics the human brain in assessing and understanding data.

“Previously, we investigated how the brain reacts to different tastes and mimicked this process by integrating different 2D materials to develop a kind of blueprint as to how AI can process information more like a human being,” said co-author Harikrishnan Ravichandran, a doctoral student in engineering science and mechanics advised by Das.

“Now, in this work, we’re considering several chemicals to see if the sensors can accurately detect them, and furthermore, whether they can detect minute differences between similar foods and discern instances of food safety concerns.”

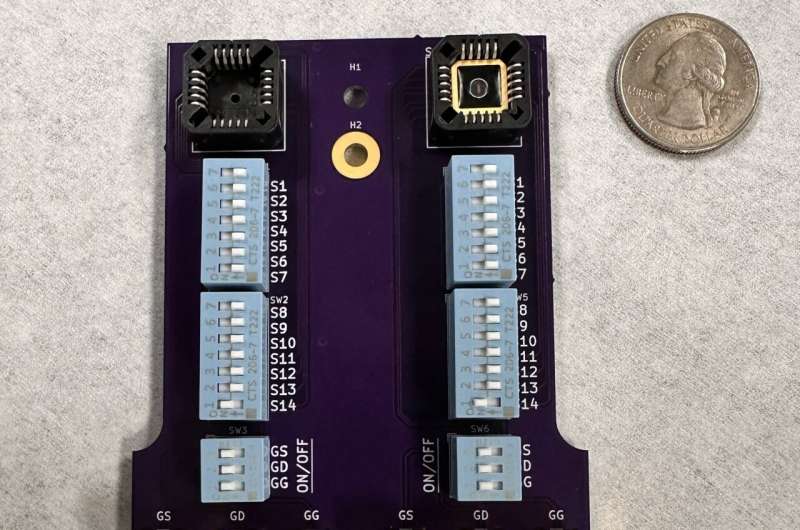

The tongue comprises a graphene-based ion-sensitive field-effect transistor, a conductive device that can detect chemical ions, linked to an artificial neural network trained on various datasets. Critically, Das noted, the sensors are non-functionalized, meaning that one sensor can detect different types of chemicals, so no dedicated sensor is needed for each potential chemical. The researchers provided the neural network with 20 specific parameters to assess, all of which are related to how a sample liquid interacts with the sensor’s electrical properties.

Based on these researcher-specified parameters, the AI could accurately detect samples—including watered-down milks, different types of sodas, blends of coffee and multiple fruit juices at several levels of freshness—and report on their content with greater than 80% accuracy in about a minute.
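To give a rough sense of what a researcher-specified parameter might look like, the sketch below derives a few summary numbers from a simulated current-versus-voltage sweep. Everything in it is an assumption made for illustration: the article does not list the 20 parameters, and the V-shaped curve, the function names and the three quantities computed here are hypothetical stand-ins rather than the team's method.

```python
def simulated_sweep(gate_mv, minimum_at_mv, floor_current, curvature):
    """Hypothetical V-shaped sensor response: current rises on both sides of a minimum."""
    return [floor_current + curvature * (v - minimum_at_mv) ** 2 for v in gate_mv]


def summary_parameters(gate_mv, currents):
    """Reduce one sweep to a few hand-picked numbers (illustrative, not the paper's 20)."""
    i_min = min(range(len(currents)), key=currents.__getitem__)
    slopes = [
        (currents[i + 1] - currents[i]) / (gate_mv[i + 1] - gate_mv[i])
        for i in range(len(currents) - 1)
    ]
    return {
        "voltage_at_min_current_mv": gate_mv[i_min],
        "min_current": currents[i_min],
        "max_abs_slope": max(abs(s) for s in slopes),
    }


# Gate voltage swept from -1000 mV to +1000 mV in 100 mV steps (assumed values).
gate_mv = list(range(-1000, 1001, 100))

# Two made-up liquids that shift the curve differently.
sample_a = summary_parameters(gate_mv, simulated_sweep(gate_mv, 200, 5.0, 1e-5))
sample_b = summary_parameters(gate_mv, simulated_sweep(gate_mv, -100, 6.5, 2e-5))

print(sample_a)
print(sample_b)
```

In a setup like this, each liquid yields a short vector of numbers, and a classifier is trained on those vectors instead of on the full sweep.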

“After achieving a reasonable accuracy with human-selected parameters, we decided to let the neural network define its own figures of merit by providing it with the raw sensor data. We found that the neural network reached a near ideal inference accuracy of more than 95% when utilizing the machine-derived figures of merit rather than the ones provided by humans,” said co-author Andrew Pannone, a doctoral student in engineering science and mechanics advised by Das.

“So, we used a method called Shapley additive explanations, which allows us to ask the neural network what it was thinking after it makes a decision.”

Image caption: The electronic tongue's graphene-based ion-sensitive field-effect transistor, located in the top right of the device, is linked to an artificial neural network trained on various datasets. © Saptarshi Das Lab/Penn State

This approach draws on game theory, which studies how the choices of multiple participants combine to produce an outcome, to assign each piece of data under consideration a value reflecting its contribution to the result. With these explanations, the researchers could reverse engineer an understanding of how the neural network weighed various components of the sample to make a final determination. This gave the team a glimpse into the neural network’s decision-making process, which has remained largely opaque in the field of AI, according to the researchers.

They found that, instead of simply assessing individual human-assigned parameters, the neural network considered together the data it determined were most important, with the Shapley additive explanations revealing how much weight the neural network gave each input.
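The idea behind Shapley values can be illustrated with a tiny worked example. The sketch below computes exact Shapley values by averaging each input's marginal contribution over every subset of the other inputs. It is a minimal illustration under stated assumptions, not the researchers' pipeline: the three input names, the toy `model` function and the baseline values are all hypothetical, and real analyses of neural networks typically rely on approximations because the exact calculation grows exponentially with the number of inputs.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, features):
    """Exact Shapley values for a function value_fn(frozenset_of_features) -> float."""
    n = len(features)
    result = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(n):
            # Weight of a coalition of size k that does not yet contain f.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = frozenset(subset)
                total += weight * (value_fn(s | {f}) - value_fn(s))
        result[f] = total
    return result


# Hypothetical inputs: a reference reading and the sample being explained.
BASELINE = {"conductance": 1.0, "threshold_shift": 0.0, "slope": 2.0}
SAMPLE = {"conductance": 3.0, "threshold_shift": 1.0, "slope": 2.0}


def model(x):
    """Toy scoring function with an interaction term; it ignores 'slope' on purpose."""
    return (0.5 * x["conductance"]
            + 2.0 * x["threshold_shift"]
            + x["conductance"] * x["threshold_shift"])


def value_fn(known):
    """Model output when only the 'known' inputs take the sample's values."""
    x = {name: (SAMPLE[name] if name in known else BASELINE[name]) for name in SAMPLE}
    return model(x)


phi = shapley_values(value_fn, list(SAMPLE))
for name, contribution in phi.items():
    print(f"{name}: {contribution:.2f}")
```

In this toy case the contributions add up to the difference between the model's output for the sample and for the baseline, and the input the model never uses receives a contribution of zero. Those two properties are what make the values readable as a statement of how much each input mattered.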

The researchers explained that this assessment could be compared to two people drinking milk. They can both identify that it is milk, but one person may think it is skim that has gone off while the other thinks it is 2% that is still fresh. The nuances of why are not easily explained even by the individual making the assessment.

“We found that the network looked at more subtle characteristics in the data—things we, as humans, struggle to define properly,” Das said.

“And because the neural network considers the sensor characteristics holistically, it mitigates variations that might occur day-to-day. In terms of the milk, the neural network can determine the varying water content of the milk and, in that context, determine if any indicators of degradation are meaningful enough to be considered a food safety issue.”

According to Das, the tongue’s capabilities are limited only by the data on which it is trained, meaning that while the focus of this study was on food assessment, it could be applied to medical diagnostics, too. And while sensitivity is important no matter where the sensor is applied, the sensors’ robustness provides a path forward for broad deployment in different industries, the researchers said.

Das explained that the sensors don’t need to be precisely identical because machine learning algorithms can look at all information together and still produce the right answer. This makes for a more practical—and less expensive—manufacturing process.

“We figured out that we can live with imperfection,” Das said. “And that’s what nature is—it’s full of imperfections, but it can still make robust decisions, just like our electronic tongue.”

More information: Andrew Pannone et al., Robust chemical analysis with graphene chemosensors and machine learning, Nature (2024). DOI: 10.1038/s41586-024-08003-w

Provided by Pennsylvania State University

Citation: An electronic tongue that detects subtle differences in liquids also provides a view into how AI makes decisions (2024, October 9)