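Catastrophic forgetting, in which a network loses previously learned knowledge when it is trained on new tasks, is an innate issue with backpropagation learning algorithms and a challenging problem in artificial neural network (ANN) and spiking neural network (SNN) research.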

The brain has, to some extent, solved this problem through multiscale plasticity. Under global regulation, neuromodulators are released through specific pathways and dispersed to target brain regions, where they locally modulate both synaptic and neuronal plasticity. Specifically, neuromodulators modify the capacity and properties of neuronal and synaptic plasticity. This modification is known as metaplasticity.
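To make "plasticity of plasticity" concrete, here is a minimal sketch assuming a generic BCM-style local learning rule (a swapped-in textbook rule, not the rule used in the study). The function `metaplastic_update`, its parameters and the specific way the neuromodulator scales the learning rate and shifts the potentiation threshold are all hypothetical choices for illustration.

```python
def metaplastic_update(w, pre, post, modulator, base_lr=0.01, base_theta=0.5):
    """Hypothetical BCM-style local rule whose plasticity is itself modulated.

    w[i][j]   : weight from presynaptic neuron j to postsynaptic neuron i
    pre, post : firing rates (lists of floats)
    modulator : global neuromodulator level in [0, 1] (assumed scalar)
    """
    # Metaplasticity: the neuromodulator changes the rule's capacity
    # (effective learning rate) and its property (LTP/LTD threshold).
    lr = base_lr * modulator
    theta = base_theta * (1.0 - 0.5 * modulator)  # more modulator -> LTP is easier
    new_w = []
    for i, row in enumerate(w):
        y = post[i]
        # BCM-like term: potentiation when y > theta, depression when y < theta
        new_w.append([wij + lr * y * (y - theta) * pre[j] for j, wij in enumerate(row)])
    return new_w
```

With `modulator=0.0` the weights do not change at all, while with `modulator=1.0` a strongly active neuron is potentiated and a weakly active one is depressed: the same local rule behaves differently depending on the global signal.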

Researchers led by Prof. Xu Bo of the Institute of Automation, Chinese Academy of Sciences (CASIA), together with their collaborators, have proposed a novel brain-inspired learning method, NACA, based on neuromodulation-dependent plasticity, which can help mitigate catastrophic forgetting in both ANNs and SNNs. The study was published in Science Advances on Aug. 25.

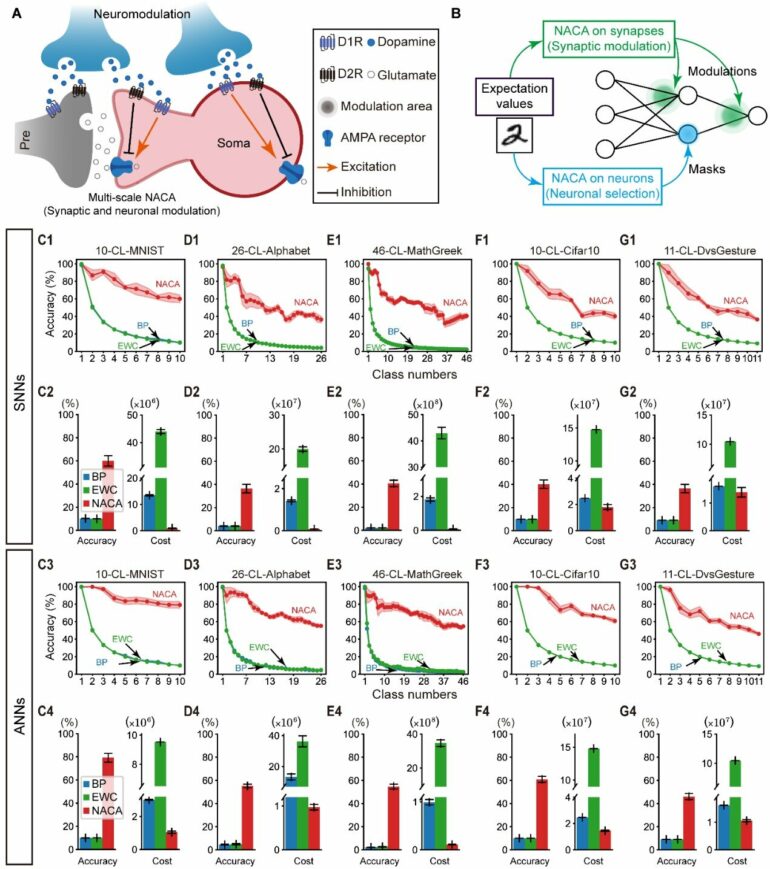

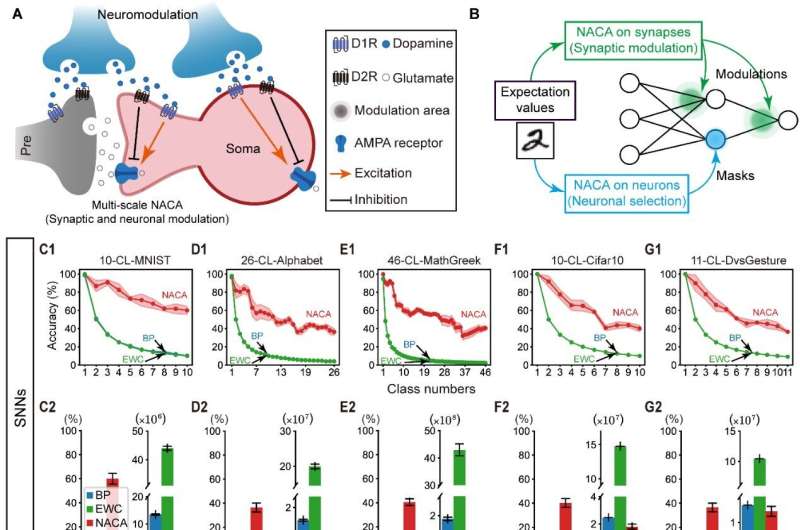

The method draws on the structure of the brain's complex neuromodulatory pathways and models them mathematically in the form of an expected matrix encoding. When a stimulus is received, dopamine supervisory signals of varying strength are generated, and these in turn modulate local synaptic and neuronal plasticity.
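The sketch below illustrates the general idea of a matrix-encoded dopamine signal driving a local three-factor learning rule. It is an assumption-laden toy, not the paper's formulation: the block layout of the expectation matrix, the `off_level` value and the helpers `make_expectation_matrix`, `dopamine_for` and `three_factor_update` are all hypothetical.

```python
def make_expectation_matrix(n_classes, n_neurons, off_level=-0.2):
    """Hypothetical expectation matrix: E[k][i] is the dopamine level that
    neuron i receives when a stimulus of class k is presented."""
    per_class = n_neurons // n_classes
    if per_class == 0:
        raise ValueError("need at least one neuron per class")
    # Neurons are assigned to classes in contiguous blocks (an assumption);
    # assigned neurons get a positive signal, the rest a weak negative one.
    return [[1.0 if i // per_class == k else off_level for i in range(n_neurons)]
            for k in range(n_classes)]


def dopamine_for(label, E, strength=1.0):
    # Global supervisory signal: the row for the current label, scaled by strength
    return [strength * e for e in E[label]]


def three_factor_update(w, pre, post, dopamine, lr=0.05):
    # Local Hebbian term (pre * post) gated by the third factor (dopamine):
    # dw_ij = lr * DA_i * post_i * pre_j
    return [[wij + lr * dopamine[i] * post[i] * pre[j] for j, wij in enumerate(row)]
            for i, row in enumerate(w)]
```

For two classes and four neurons, presenting class 1 strengthens active synapses onto neurons 2 and 3 and slightly weakens those onto neurons 0 and 1, so each neuron receives a dopamine signal of different strength and sign.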

NACA in a class-continual learning task. (A, B) Neuromodulation of both local neuronal plasticity and synaptic plasticity. (C-G) Performance of NACA compared with EWC and BP. © CASIA

NACA supports purely feedforward learning for training both ANNs and SNNs. Supported by global dopamine diffusion, the modulatory signal propagates forward in sync with the input signal, or even ahead of it. Combined with selective adjustment of spike-timing-dependent plasticity (STDP), this gives NACA significant advantages in fast convergence and in mitigating catastrophic forgetting.
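As an illustration of how a dopamine signal can selectively adjust STDP, here is a minimal sketch of generic dopamine-modulated pair-based STDP. It is not NACA's exact rule: the exponential window, the time constant `tau=20.0` ms, and the choice to skip neurons with zero dopamine are assumptions, and `modulated_stdp` is a hypothetical helper.

```python
import math


def stdp_window(dt, a_plus=1.0, a_minus=1.0, tau=20.0):
    """Classic pair-based STDP kernel; dt = t_post - t_pre in ms."""
    if dt > 0:
        return a_plus * math.exp(-dt / tau)    # pre before post: potentiation
    if dt < 0:
        return -a_minus * math.exp(dt / tau)   # post before pre: depression
    return 0.0


def modulated_stdp(w, pre_spikes, post_spikes, dopamine, lr=0.01):
    """w[i][j]: synapse from input j to neuron i.
    pre_spikes[j], post_spikes[i]: lists of spike times (ms).
    dopamine[i]: assumed modulatory signal for postsynaptic neuron i."""
    new_w = [row[:] for row in w]
    for i, t_posts in enumerate(post_spikes):
        if dopamine[i] == 0.0:
            continue  # selective: neurons without dopamine keep their synapses
        for j, t_pres in enumerate(pre_spikes):
            # Sum the STDP contributions of all pre/post spike pairs
            trace = sum(stdp_window(tp - tq) for tp in t_posts for tq in t_pres)
            # Dopamine scales, and can flip the sign of, the STDP update
            new_w[i][j] += lr * dopamine[i] * trace
    return new_w
```

Here the sign and magnitude of the dopamine signal decide whether a causal pre-then-post spike pair strengthens or weakens a synapse, and neurons that receive no dopamine are left untouched.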

The research team evaluated NACA's accuracy and computational cost on two typical pattern recognition tasks, one for images and one for speech. In tests on the standard image classification (MNIST) and speech recognition (TIDigits) datasets, NACA achieved higher classification accuracy (by approximately 1.92%) and lower learning energy consumption (by approximately 98%).

The research team also focused on testing NACA's ability in class-continual learning, and extended neuromodulation from synaptic plasticity to neuronal plasticity as well.
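In class-continual learning, the classes of a dataset arrive as a sequence of tasks, and after each task the model must classify among all classes seen so far without being told which task an input came from. A minimal sketch of that protocol follows; the helper names and the two-classes-per-task split are hypothetical and do not describe the study's exact setup.

```python
def class_incremental_tasks(classes, classes_per_task):
    """Split a label set into a sequence of tasks with disjoint classes."""
    return [classes[i:i + classes_per_task]
            for i in range(0, len(classes), classes_per_task)]


def seen_classes(tasks, t):
    # After training on task t, the model is evaluated on every class
    # seen so far, with no task identity given at test time.
    return [c for task in tasks[: t + 1] for c in task]
```

For example, splitting ten digit classes two at a time gives five tasks, and after the third task the model must distinguish six classes at once. This is why forgetting earlier classes directly lowers accuracy.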

Across five major continual learning tasks from different categories (continual MNIST handwritten digits, continual Alphabet handwritten letters, continual MathGreek handwritten mathematical symbols, continual CIFAR-10 natural images, and continual DvsGesture dynamic gestures), NACA consumed less energy than backpropagation (BP) and elastic weight consolidation (EWC) and greatly mitigated catastrophic forgetting.
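One common way to quantify catastrophic forgetting across such a task sequence is "average forgetting": for each earlier task, the drop from the best accuracy it reached before the final task to its accuracy at the end. The sketch below assumes that metric; the article does not say which forgetting measure the study used, and `average_forgetting` is a hypothetical helper.

```python
def average_forgetting(acc):
    """acc[t][j]: accuracy on task j after training through task t (j <= t).

    Forgetting for task j = best accuracy on j before the final task
    minus its accuracy after the final task."""
    T = len(acc)
    final = acc[T - 1]
    drops = []
    for j in range(T - 1):  # the last task cannot have been forgotten yet
        best_before = max(acc[t][j] for t in range(j, T - 1))
        drops.append(best_before - final[j])
    return sum(drops) / len(drops) if drops else 0.0
```

A value near zero means earlier tasks are retained. A large value means new training has overwritten them, which is the failure mode that methods such as EWC and NACA aim to reduce.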

“NACA is a biologically-plausible global optimization algorithm that uses macroscopic plasticity to further ‘modulate’ local plasticity, which can be seen as a ‘plasticity of plasticity’ method with intuitive functional consistency with ‘learn to learn’ and ‘meta learning,’” said Prof. Xu.

More information:

Tielin Zhang et al, A brain-inspired algorithm that mitigates catastrophic forgetting of artificial and spiking neural networks with low computational cost, Science Advances (2023). DOI: 10.1126/sciadv.adi2947

Provided by

Chinese Academy of Sciences

Citation:

Brain-inspired learning algorithm realizes metaplasticity in artificial and spiking neural networks (2023, September 1)