Caltech neuroscientists are making promising progress toward showing that a brain–machine interface (BMI) they developed for implantation in the brains of patients who have lost the ability to speak could one day help all such patients communicate simply by thinking, without speaking or miming.

In 2022, the team reported that their BMI had been successfully implanted and used by a patient to communicate unspoken words. Now, reporting in the journal Nature Human Behaviour, the scientists have shown that the BMI has worked successfully in a second human patient.

“We are very enthusiastic about these new findings,” says Richard Andersen, the James G. Boswell Professor of Neuroscience and director and leadership chair of the Tianqiao and Chrissy Chen Brain–Machine Interface Center at Caltech, who described the earlier research in a recent public lecture at Caltech. “We reproduced the results in a second individual, which means that this is not dependent on the particulars of one person’s brain or where exactly their implant landed. This is indeed more likely to hold up in the larger population.”

BMIs are being developed and tested to help patients in a number of ways. For example, some work has focused on developing BMIs that can control robotic arms or hands. Other groups have had success at predicting participants’ speech by analyzing brain signals recorded from motor areas when a participant whispered or mimed words.

But predicting what somebody is thinking—detecting their internal dialogue—is much more difficult, as it does not involve any movement, explains Sarah Wandelt, Ph.D., lead author on the new paper, who is now a neural engineer at the Feinstein Institutes for Medical Research in Manhasset, New York.

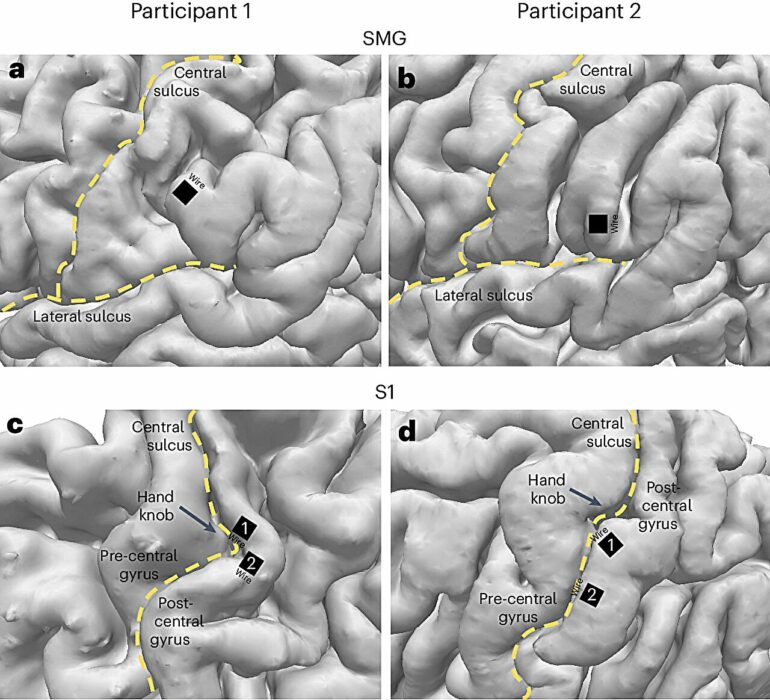

The new research is the most accurate yet at predicting internal words. In this case, brain signals were recorded from single neurons in a brain area called the supramarginal gyrus located in the posterior parietal cortex (PPC). The researchers had found in a previous study that this brain area represents spoken words.

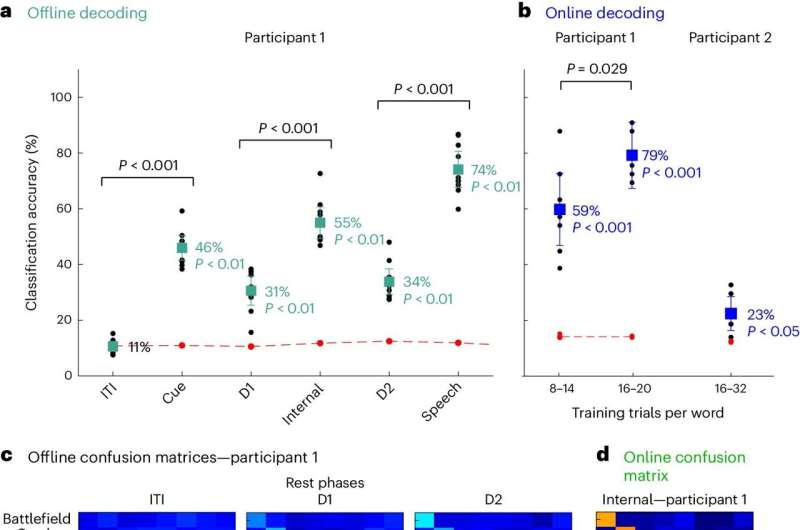

In the current study, the researchers first trained the BMI device to recognize the brain patterns produced when certain words were spoken internally, or thought, by two tetraplegic participants. This training period took only about 15 minutes. The researchers then flashed a word on a screen and asked the participant to “say” the word internally. The results showed that the BMI algorithms were able to predict the eight words tested, including two nonsensical words, with an average accuracy of 79% for one participant and 23% for the other. For comparison, guessing at random among eight equally likely words would be correct 12.5% of the time.
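The train-then-test procedure described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not the decoder used in the study: the firing rates are simulated, the word labels are placeholders, the neuron count, trial counts, and noise level are invented, and a simple nearest-centroid classifier stands in for whatever algorithm the researchers actually used. The point is to show how decoding accuracy is compared with the chance level for eight words.

```python
import random

# All values below are assumptions for illustration; none come from the paper.
WORDS = ["word_%d" % i for i in range(8)]  # placeholder labels for the eight words
N_NEURONS = 20     # assumed number of recorded neurons
TRAIN_TRIALS = 8   # assumed training trials per word
TEST_TRIALS = 8    # assumed test trials per word
NOISE_SD = 2.0     # assumed trial-to-trial variability in firing rate


def simulate_trial(tuning, rng):
    """Return one noisy firing-rate vector around a word's mean pattern."""
    return [rng.gauss(mean, NOISE_SD) for mean in tuning]


def centroid(trials):
    """Average a list of equal-length vectors element by element."""
    n = len(trials)
    return [sum(column) / n for column in zip(*trials)]


def decode(vector, centroids):
    """Return the word whose centroid has the smallest squared distance."""
    def squared_distance(word):
        return sum((a - b) ** 2 for a, b in zip(vector, centroids[word]))
    return min(centroids, key=squared_distance)


def run_simulation(seed=0):
    """Train on simulated trials, then return accuracy on fresh trials."""
    rng = random.Random(seed)
    # Each word gets its own simulated mean firing-rate pattern.
    tuning = {w: [rng.uniform(0.0, 10.0) for _ in range(N_NEURONS)] for w in WORDS}
    # "Training": estimate one centroid per word from a few labeled trials.
    centroids = {
        w: centroid([simulate_trial(tuning[w], rng) for _ in range(TRAIN_TRIALS)])
        for w in WORDS
    }
    # "Testing": decode new trials and count the correct predictions.
    correct = 0
    total = 0
    for w in WORDS:
        for _ in range(TEST_TRIALS):
            if decode(simulate_trial(tuning[w], rng), centroids) == w:
                correct += 1
            total += 1
    return correct / total


chance_level = 1 / len(WORDS)  # 0.125 for eight equally likely words
accuracy = run_simulation()
print("chance level:", chance_level)
print("simulated decoding accuracy:", accuracy)
```

Because the simulated word patterns are well separated, this toy decoder scores far above chance. Real neural recordings are much noisier, which is one reason accuracy can differ widely between participants.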

Words can be significantly decoded during internal speech in the SMG. © Nature Human Behaviour (2024). DOI: 10.1038/s41562-024-01867-y

“Since we were able to find these signals in this particular brain region, the PPC, in a second participant, we can now be sure that this area contains these speech signals,” says David Bjanes, a postdoctoral scholar research associate in biology and biological engineering and an author of the new paper. “The PPC encodes a large variety of different task variables. You could imagine that some words could be tied to other variables in the brain for one person. The likelihood of that being true for two people is much, much lower.”

The work is still preliminary but could help patients with brain injuries, paralysis, or diseases, such as amyotrophic lateral sclerosis (ALS), that affect speech. “Neurological disorders can lead to complete paralysis of voluntary muscles, resulting in patients being unable to speak or move, but they are still able to think and reason. For that population, an internal speech BMI would be incredibly helpful,” Wandelt says.

The researchers point out that the BMIs cannot be used to read people’s minds; the device would need to be trained on each person’s brain separately, and it works only when a person focuses on a particular word.

Additional authors on the paper, “Representation of internal speech by single neurons in human supramarginal gyrus,” include Kelsie Pejsa, a lab and clinical studies manager at Caltech, and Brian Lee and Charles Liu, both visiting associates in biology and biological engineering from the Keck School of Medicine of USC. Bjanes and Liu are also affiliated with the Rancho Los Amigos National Rehabilitation Center in Downey, California.

More information: Sarah K. Wandelt et al., Representation of internal speech by single neurons in human supramarginal gyrus, Nature Human Behaviour (2024). DOI: 10.1038/s41562-024-01867-y

Provided by California Institute of Technology

Citation: Brain-machine interface device predicts internal speech in second patient (2024, May 15)