Language enables people to transmit thoughts to each other because each person’s brain responds similarly to the meaning of words. In our newly published research, my colleagues and I developed a framework to model the brain activity of speakers as they engaged in face-to-face conversations.

We recorded the electrical activity of two people’s brains as they engaged in unscripted conversations. Previous research has shown that when two people converse, their brain activity becomes coupled, or aligned, and that the degree of neural coupling is associated with better understanding of the speaker’s message.

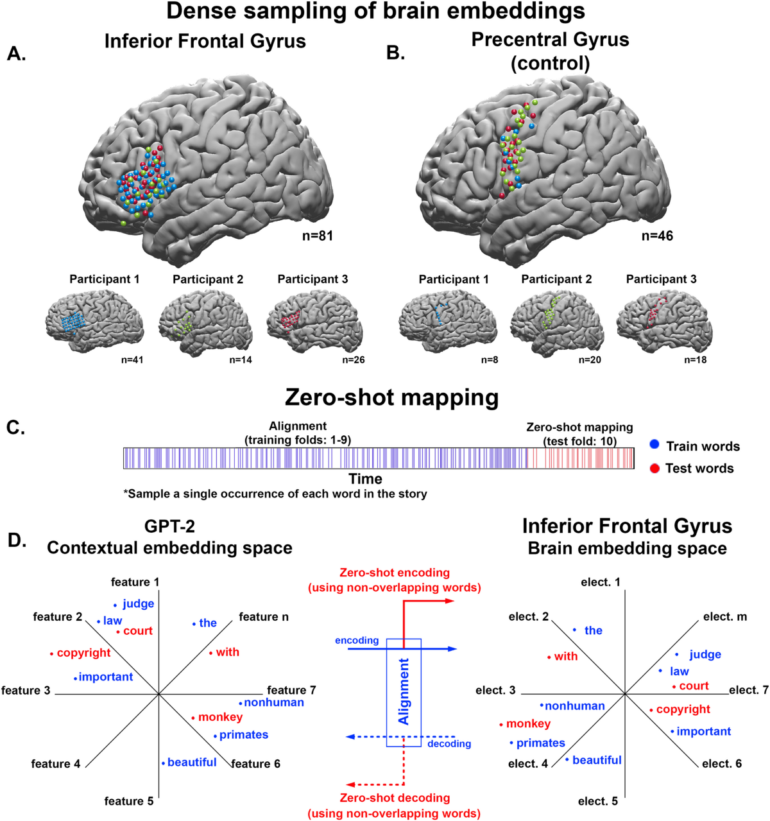

A neural code refers to particular patterns of brain activity associated with distinct words in their contexts. We found that the speakers’ brains are aligned on a shared neural code. Importantly, the brain’s neural code resembled the artificial neural code of large language models, or LLMs.

The neural patterns of words

A large language model is a machine learning program that can generate text by predicting what words most likely follow others. Large language models excel at learning the structure of language, generating humanlike text and holding conversations. They can even pass the Turing test, making it difficult for someone to discern whether they are interacting with a machine or a human. Like humans, LLMs learn how to speak by reading or listening to text produced by other humans.

By giving the LLM a transcript of the conversation, we were able to extract its “neural activations,” that is, how it translates words into numbers, as it “reads” the script. We then correlated the speaker’s brain activity with both the LLM’s activations and the listener’s brain activity. We found that the LLM’s activations could predict the brain activity shared by the speaker and the listener.
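For readers who want a concrete picture of this kind of analysis, here is a minimal sketch in Python. It is not the code or the exact method from our study: it assumes a simple linear (ridge regression) mapping from word activations to brain signals, and it uses synthetic random numbers in place of real LLM activations and real brain recordings. All names, such as `ridge_fit`, `columnwise_corr` and `shared_map`, are hypothetical and chosen for illustration.

```python
import numpy as np

def ridge_fit(X, Y, alpha):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)

def columnwise_corr(A, B):
    """Pearson correlation between matching columns of A and B."""
    A = A - A.mean(axis=0)
    B = B - B.mean(axis=0)
    return (A * B).sum(axis=0) / (
        np.linalg.norm(A, axis=0) * np.linalg.norm(B, axis=0)
    )

# Synthetic stand-ins (assumption): one row per word in the transcript.
rng = np.random.default_rng(0)
n_words, n_dims, n_channels = 400, 16, 8
embeddings = rng.standard_normal((n_words, n_dims))    # "LLM activations"
shared_map = rng.standard_normal((n_dims, n_channels))  # shared code (assumed)
speaker = embeddings @ shared_map + rng.standard_normal((n_words, n_channels))
listener = embeddings @ shared_map + rng.standard_normal((n_words, n_channels))

# Fit the mapping on some words, then evaluate on words held out from fitting.
train, test = slice(0, 300), slice(300, 400)
W = ridge_fit(embeddings[train], speaker[train], alpha=1.0)
predicted = embeddings[test] @ W

# How well do the activations predict each brain, channel by channel?
speaker_scores = columnwise_corr(predicted, speaker[test])
listener_scores = columnwise_corr(predicted, listener[test])

# Direct speaker-listener coupling on the same held-out words.
coupling = columnwise_corr(speaker[test], listener[test])
```

In this toy setup, the mapping is fitted on the speaker's signal alone, yet it also predicts the listener's signal because both were generated from the same underlying code. That is the intuition behind using a model's activations to capture what two brains share, though real recordings are far noisier and require careful handling of timing.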

To understand each other, people rely on a shared agreement about grammatical rules and the meaning of words in context. For instance, we know to use the past tense form of a verb to talk about past actions, as in the sentence: “He visited the museum yesterday.” Additionally, we intuitively understand that the same word can have different meanings in different situations. For instance, the word “cold” in the sentence “You are cold as ice” can refer to either a person’s body temperature or a personality trait, depending on the context. Because natural language is so complex and rich, we lacked a precise mathematical model to describe it until the recent success of large language models.

Similar patterns of brain activity emerge in a speaker before saying a word and in a listener after hearing it.


Our study found that large language models can predict how linguistic information is encoded in the human brain, providing a new tool to interpret human brain activity. The similarity between the human brain’s and the large language model’s linguistic code has…