The real world poses many challenges for AI systems. One of them is the requirement that machines recognize and quickly learn objects they have not seen before. An AI that is robust to such changes would be of great use in adapting quickly to a dynamic reality, whether it is a robot recognizing new products at a grocery store or a self-driving car encountering new road signs or unfamiliar objects around it.

Bar-Ilan University researchers have uncovered a new universal law describing how artificial neural networks handle an increasing number of categories to identify. The law shows how the identification error rate of such networks increases with the number of objects they are required to recognize.

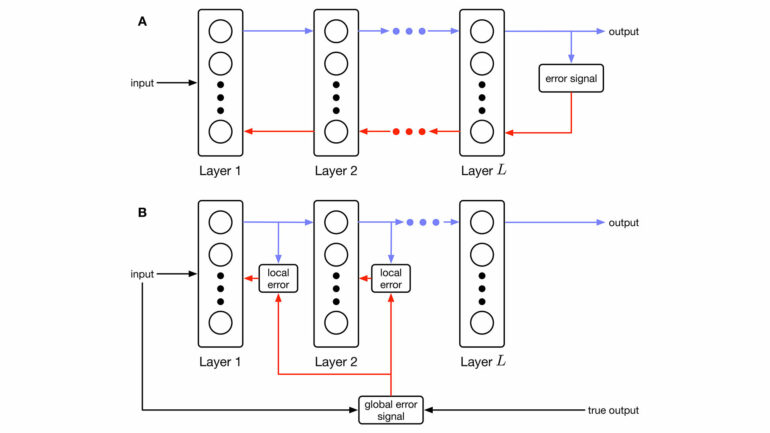

This law was found to govern both shallow and deep neural network architectures, indicating that shallow networks, like those of the brain, can imitate the functionality of deeper ones. A shallow, wide architecture can perform as well as a deep, narrow one, just as a wide, low-rise building can house the same number of inhabitants as a narrow skyscraper.

The new law, known as a scaling law, was revealed in a study published today in Physica A: Statistical Mechanics and its Applications by a team of researchers led by Prof. Ido Kanter from Bar-Ilan University’s Department of Physics and Gonda (Goldschmied) Multidisciplinary Brain Research Center.

Ella Koresh, an undergraduate student and a key contributor to the research, highlights the practical implications of this finding. “This is a significant advancement because one of the most critical aspects of deep learning is latency, the time it takes for the network to process and identify an object. As networks become deeper, latency increases, leading to delays in the model’s response, while shallow brain-inspired networks have lower latency and faster response,” she explains.

Reducing the latency of AI systems has profound implications for real-time decision-making. In addition, the scaling law introduced in this research is vital for learning scenarios in which the number of labels is dynamic.

More information:

Ella Koresh et al, Scaling in Deep and Shallow Learning Architectures, Physica A: Statistical Mechanics and its Applications (2024). DOI: 10.1016/j.physa.2024.129909

Provided by

Bar-Ilan University

Citation:

New scaling law demonstrates how AI copes with changing categories (2024, June 20)