As public concern about the ethical and social implications of artificial intelligence keeps growing, it might seem like it’s time to slow down. But inside tech companies themselves, the sentiment is quite the opposite. As Big Tech’s AI race heats up, it would be an “absolutely fatal error in this moment to worry about things that can be fixed later,” a Microsoft executive wrote in an internal email about generative AI, as The New York Times reported.

In other words, it’s time to “move fast and break things,” to quote Mark Zuckerberg’s old motto. Of course, when you break things, you might have to fix them later – at a cost.

In software development, the term “technical debt” refers to the implied cost of making future fixes as a consequence of choosing faster, less careful solutions now. Rushing to market can mean releasing software that isn’t ready, knowing that once it does hit the market, you’ll find out what the bugs are and can hopefully fix them then.

However, negative news stories about generative AI tend not to be about these kinds of bugs. Instead, much of the concern is about AI systems amplifying harmful biases and stereotypes, and about students using AI deceptively. We hear about privacy concerns, people being fooled by misinformation, labor exploitation and fears about how quickly human jobs may be replaced, to name a few. These problems are not software glitches. Realizing that a technology reinforces oppression or bias is very different from learning that a button on a website doesn’t work.

As a technology ethics educator and researcher, I have thought a lot about these kinds of “bugs.” What’s accruing here is not just technical debt, but ethical debt. Just as technical debt can result from limited testing during the development process, ethical debt results from not considering possible negative consequences or societal harms. And with ethical debt in particular, the people who incur it are rarely the people who pay for it in the end.

Off to the races

The release of OpenAI’s ChatGPT in November 2022 was the starter pistol for today’s AI race, and from that moment I imagined the debt ledger starting to fill.

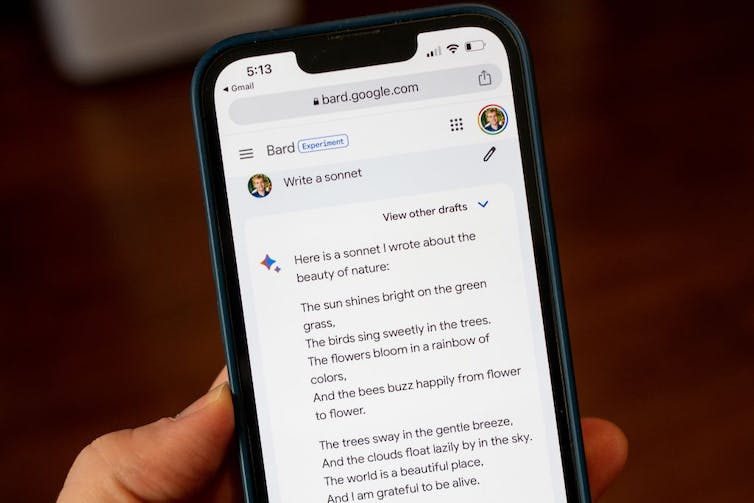

Within months, Google and Microsoft released their own generative AI programs, which seemed rushed to market in an effort to keep up. Google’s stock price fell when its chatbot Bard confidently supplied a wrong answer during the company’s own demo. One might expect Microsoft to be particularly cautious when it comes to chatbots, considering Tay, its Twitter-based bot that was shut down almost immediately in 2016 after spouting misogynist and white supremacist talking points. Yet early conversations with the AI-powered Bing left some users unsettled, and the chatbot has repeated known misinformation.

Not all AI-generated writing is so delightful. (Smith Collection/Gado/Archive Photos via Getty Images)

When the social debt of these rushed releases comes due, I…