Some of the most complex cognitive functions are possible because different sides of your brain control them. Chief among them is speech perception, the ability to interpret language. In people, the speech perception process is typically dominated by the left hemisphere.

Your brain breaks apart fleeting streams of acoustic information into parallel channels – linguistic, emotional and musical – and acts as a biological multicore processor. Although scientists have recognized this division of cognitive labor for over 160 years, the mechanisms underpinning it remain poorly understood.

Researchers know that distinct subgroups of neurons must be tuned to different frequencies and timing of sound. In recent decades, studies in animal models, especially rodents, have confirmed that splitting sound processing across the brain is not uniquely human, opening the door to more closely dissecting how this occurs.

Yet a central puzzle persists: What makes near-identical regions in opposite hemispheres of the brain process different types of information?

Answering that question promises broader insight into how experience sculpts neural circuits during critical periods of early development, and why that process is disrupted in neurodevelopmental disorders.

Timing is everything

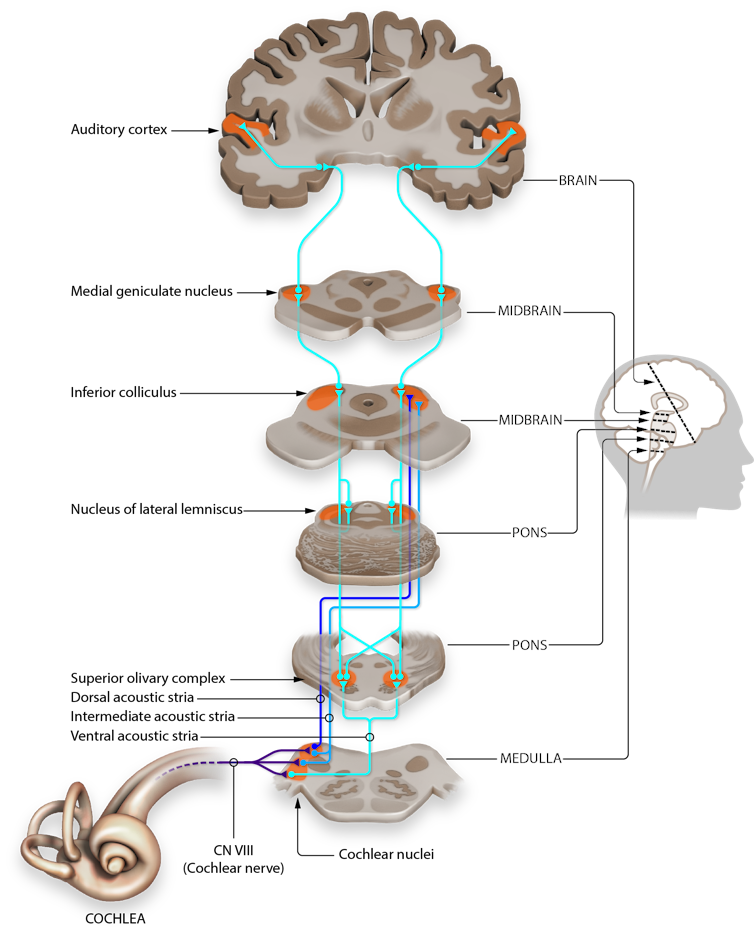

Sensory processing of sounds begins in the cochlea, a part of the inner ear where sound frequencies are converted into electricity and forwarded to the auditory cortex of the brain. Researchers believe that the division of labor across brain hemispheres required to recognize sound patterns begins in this region.

For more than a decade, my work as a neuroscientist has focused on the auditory cortex. My lab has shown that mice process sound differently in the left and right hemispheres of their brains, and we have worked to tease apart the underlying circuitry.

For example, we’ve found the left side of the brain has more focused, specialized connections that may help detect key features of speech, such as distinguishing one word from another. Meanwhile, the right side is more broadly connected, suited for processing melodies and the intonation of speech.

Sound information moves through the cochlea to the brain.

Jonathan E. Peelle, CC BY-SA

In our latest work, we tackled the question of how these left-right differences in hearing develop, and our results underscore the adage that timing is everything.

We tracked how neural circuits in the left and right auditory cortex develop from early life to adulthood. To do this, we recorded electrical signals in mouse brains to observe how the auditory cortex matures and to see how sound experiences shape its structure.

Surprisingly, we found that the right hemisphere consistently outpaced the left in development, showing more rapid growth and refinement. This suggests there are critical windows of development – brief periods when the brain is especially adaptive and sensitive…