Is social media designed to reward people for acting badly?

The answer is clearly yes, given that the reward structure on social media platforms relies on popularity, as indicated by the number of responses – likes and comments – a post receives from other users. Black-box algorithms then further amplify the spread of posts that have attracted attention.

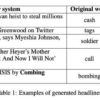

Sharing widely read content, by itself, isn’t a problem. But it becomes a problem when attention-getting, controversial content is prioritized by design. Given the design of social media sites, users form habits of automatically sharing the most engaging information regardless of its accuracy and potential harm. Offensive statements, attacks on out-groups and false news are amplified, and misinformation often spreads further and faster than the truth.

We are two social psychologists and a marketing scholar. Our research, presented at the 2023 Nobel Prize Summit, shows that social media can also build user habits of sharing high-quality content. After a few tweaks to the reward structure of social media platforms, users begin to share information that is accurate and fact-based.

The problem with habit-driven misinformation-sharing is significant. Facebook’s own research shows that being able to share already shared content with a single click drives misinformation. Thirty-eight percent of views of text misinformation and 65% of views of photographic misinformation come from content that has been reshared twice, meaning a share of a share of an original post. The biggest sources of misinformation, such as Steve Bannon’s War Room, exploit social media’s popularity optimization to promote controversy and misinformation beyond their immediate audience.

How social media algorithms drive misinformation.

Re-targeting rewards

To investigate the effect of a new reward structure, we gave financial rewards to some users for sharing accurate content and not sharing misinformation. These financial rewards simulated the positive social feedback, such as likes, that users typically receive when they share content on platforms. In essence, we created a new reward structure based on accuracy instead of attention.

As on popular social media platforms, participants in our research learned what got rewarded by sharing information and observing the outcome, without being explicitly informed of the rewards beforehand. This means that the intervention did not change the users’ goals, just their online experiences. After the change in reward structure, participants shared significantly more content that was accurate. More remarkably, users continued to share accurate content even after we removed rewards for accuracy in a subsequent round of testing. These results show that users can be given incentives to share accurate information as a matter of habit.

A different group of users received…