Neurotechnologies – devices that interact directly with the brain or nervous system – were once dismissed as the stuff of science fiction. Not anymore.

Several companies are trying to develop brain-computer interfaces, or BCIs, in hopes of helping patients with severe paralysis or other neurological disorders. Entrepreneur Elon Musk’s company Neuralink, for example, recently received Food and Drug Administration approval to begin human testing for a tiny brain implant that can communicate with computers. There are also less invasive neurotechnologies, like EEG headsets that sense electrical activity inside the wearer’s brain, with applications ranging from entertainment and wellness to education and the workplace.

Neurotechnology research and patents have soared at least twentyfold over the past two decades, according to a United Nations report, and devices are getting more powerful. Newer BCIs, for example, have the potential to collect brain and nervous system data more directly, with higher resolution, in greater amounts, and in more pervasive ways.

However, these improvements have also raised concerns about mental privacy and human autonomy – questions I think about in my research on the ethical and social implications of brain science and neural engineering. Who owns the generated data, and who should get access? Could this type of device threaten individuals’ ability to make independent decisions?

In July 2023, the U.N. agency for science and culture held a conference on the ethics of neurotechnology, calling for a framework to protect human rights. Some critics have even argued that societies should recognize a new category of human rights, “neurorights.” In 2021, Chile became the first country whose constitution addresses concerns about neurotechnology.

Advances in neurotechnology do raise important privacy concerns. However, I believe these debates can overlook more fundamental threats to privacy.

A glimpse inside

Concerns about neurotechnology and privacy focus on the idea that an observer can “read” a person’s thoughts and feelings just from recordings of their brain activity.

It is true that some neurotechnologies can record brain activity with great specificity: for example, high-density electrode arrays now in development allow high-resolution recording from multiple parts of the brain.

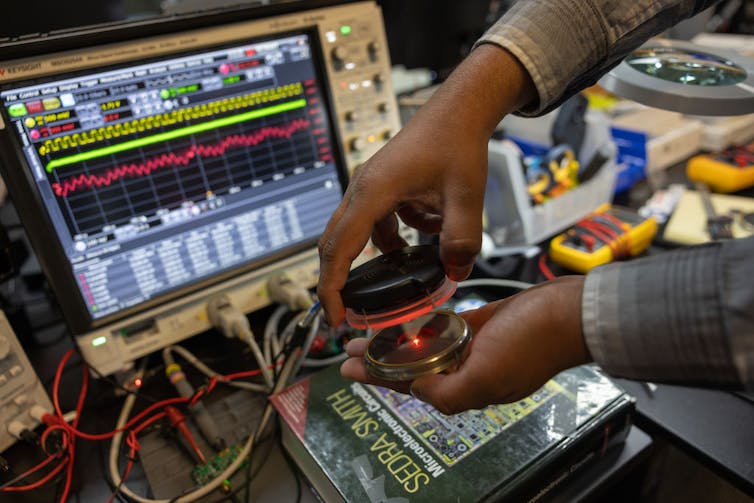

Paradromics, an Austin-based company, is developing a brain-computer interface to aid disabled and nonverbal patients with communication.

Julia Robinson for The Washington Post via Getty Images

Researchers can make inferences about mental phenomena and interpret behavior based on this kind of information. However, “reading” the recorded brain activity is not straightforward. The data has already passed through filters and algorithms before a human ever sees the output.

Given these complexities, my colleague Daniel Susser and I wrote a recent article…