Facebook scientists said on Wednesday that they had developed artificial intelligence software that can not only identify “deepfake” images but also figure out where they came from.

Deepfakes are photos, videos or audio clips altered using artificial intelligence to appear authentic. Experts have warned that they can be misleading or entirely false.

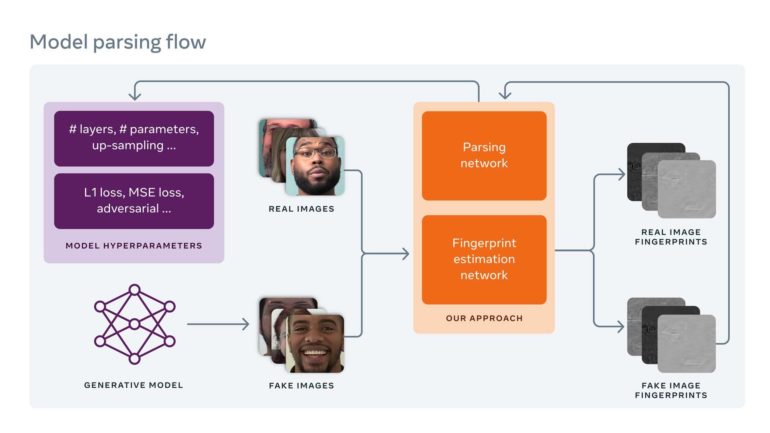

Facebook research scientists Tal Hassner and Xi Yin said their team worked with Michigan State University to create software that reverse engineers deepfake images to figure out how they were made and where they originated.

“Our method will facilitate deepfake detection and tracing in real-world settings, where the deepfake image itself is often the only information detectors have to work with,” the scientists said in a blog post.

“This work will give researchers and practitioners tools to better investigate incidents of coordinated disinformation using deepfakes, as well as open up new directions for future research,” they added.

Facebook’s new software runs deepfakes through a network to search for imperfections left during the manufacturing process, which the scientists say alter an image’s digital “fingerprint.”

“In digital photography, fingerprints are used to identify the digital camera used to produce an image,” the scientists said.

“Similar to device fingerprints, image fingerprints are unique patterns left on images… that can equally be used to identify the generative model that the image came from.”

“Our research pushes the boundaries of understanding in deepfake detection,” they said.
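The fingerprint idea described above can be illustrated with a toy example. The sketch below is not Facebook's method, which relies on a trained network. It swaps in a much simpler stand-in: a high-pass filter extracts the fine-grained residual of an image, residuals from known sources are averaged into a reference fingerprint, and a new image is attributed to whichever reference correlates best. The names `model_A`, `model_B`, `toy_image` and the synthetic patterns are hypothetical and exist only for illustration.

```python
import numpy as np


def box_blur(img: np.ndarray) -> np.ndarray:
    """Smooth a 2-D image with a 3x3 mean filter (edges are padded)."""
    padded = np.pad(img, 1, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w), dtype=float)
    for dy in range(3):
        for dx in range(3):
            out += padded[dy:dy + h, dx:dx + w]
    return out / 9.0


def residual(img: np.ndarray) -> np.ndarray:
    """High-frequency residual: the image minus its smoothed version."""
    img = img.astype(float)
    return img - box_blur(img)


def estimate_fingerprint(images) -> np.ndarray:
    """Average the residuals of many images from one (assumed) source."""
    return np.mean([residual(im) for im in images], axis=0)


def correlation(a: np.ndarray, b: np.ndarray) -> float:
    """Normalized correlation between two arrays of the same shape."""
    a = a - a.mean()
    b = b - b.mean()
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float((a * b).sum() / denom) if denom > 0 else 0.0


def attribute(img: np.ndarray, fingerprints: dict):
    """Return the best-matching source name and all correlation scores."""
    r = residual(img)
    scores = {name: correlation(r, fp) for name, fp in fingerprints.items()}
    return max(scores, key=scores.get), scores


def toy_image(pattern: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Hypothetical 'generator': smooth random content plus a fixed trace."""
    content = box_blur(box_blur(rng.normal(size=pattern.shape)))
    return content + 0.5 * pattern


# Two made-up generators, each leaving a different periodic trace.
rng = np.random.default_rng(0)
yy, xx = np.indices((32, 32))
patterns = {
    "model_A": np.where((yy + xx) % 2 == 0, 1.0, -1.0),  # checkerboard
    "model_B": np.where(xx % 2 == 0, 1.0, -1.0),         # vertical stripes
}
fingerprints = {
    name: estimate_fingerprint([toy_image(p, rng) for _ in range(20)])
    for name, p in patterns.items()
}

best, scores = attribute(toy_image(patterns["model_A"], rng), fingerprints)
print(best, scores)
```

In this toy setting the traces are strong and regular, so simple correlation is enough. Real generative models leave far subtler patterns, which is why the researchers describe using a network to find them.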

Microsoft late last year unveiled software that can help spot deepfake photos or videos, adding to an arsenal of programs designed to fight the hard-to-detect images ahead of the US presidential election.

The company’s Video Authenticator software analyzes an image or each frame of a video, looking for evidence of manipulation that could be invisible to the naked eye.

More information:

ai.facebook.com/blog/reverse-e … ngle-deepfake-image/

2021 AFP

Citation: Facebook AI software able to dig up origins of deepfake images (2021, June 17), retrieved 17 June 2021 from https://techxplore.com/news/2021-06-facebook-ai-software-deepfake-images.html