Quantum computers hold enormous promise in our big data world. If researchers can harness their potential, these devices could perform massively complex computations at lightning speed.

Classical computers such as our laptops store information in bits, which exist in one of two physical states: 0 or 1. But qubits, the equivalent form of data storage for quantum computers, work differently because their nature is probabilistic rather than deterministic. They can exist in a superposition of 0 and 1 at the same time, which is what gives them their power. As the number of qubits in a quantum computer increases, the amount of information needed to describe their joint state grows exponentially, which is why such a computer could, for certain problems, process information far faster than a classical computer.

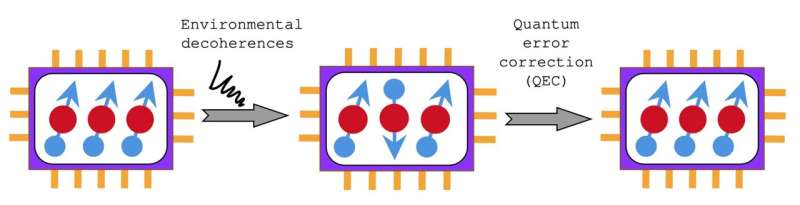

But there is a downside. Qubits are fragile. Their states change very quickly, for example in response to environmental factors such as temperature, and these changes introduce many errors. Researchers have struggled to develop an efficient way to correct these errors in real time. The methods used to correct such quantum errors are known as quantum error correction (QEC) schemes.

“For quantum computing, these errors are really an issue,” says Dr. Sangkha Borah, a postdoctoral researcher in the Quantum Machines Unit led by Professor Jason Twamley at the Okinawa Institute of Science and Technology (OIST). “If we can figure out how to accurately perform QEC, we might have usable quantum computers very soon.”

Now, Dr. Borah and his colleagues at OIST, and their collaborators at Trinity College in Dublin, Ireland, and the University of Queensland in Brisbane, Australia, have proposed a new error correction technique, which has recently been published in Physical Review Research.

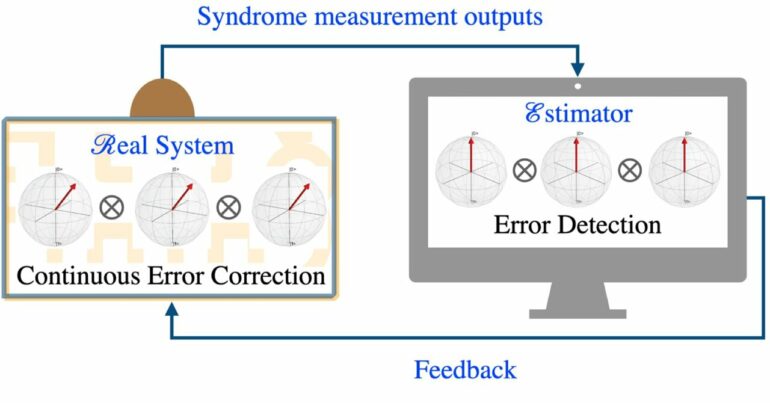

This schematic shows how the MBE-CQEC scheme works for three qubits. Qubits in a quantum computer (left) are continuously measured by an estimator (right), which is run by a classical computer. The estimator detects errors by making syndrome measurements, then corrects them with appropriate feedback. © Sangkha Borah, OIST

Achieving QEC involves making a collection of multiple qubits using a quantum mechanical property called entanglement. To detect errors happening in the qubits, a QEC scheme must apply a series of measurements known as syndrome measurements. These measurements assess whether two nearest-neighbor qubits are aligned in the same direction or not. The results of these measurements are called syndromes, and based on them, the error in the qubits can be detected and subsequently corrected.
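To make the idea of a syndrome concrete, here is a minimal classical sketch of the textbook three-qubit bit-flip code. It is an illustration only, not the MBE-CQEC scheme: ordinary bits stand in for qubits, and the names `syndrome`, `CORRECTION` and `correct` are hypothetical. In a real quantum code the two parities are measured without reading out the individual qubits, which this toy model does not capture.

```python
# Classical toy model of the three-qubit bit-flip code.
# Assumption: at most one of the three bits has been flipped.

def syndrome(bits):
    """Return the two nearest-neighbor parity checks (0 = aligned, 1 = not aligned)."""
    q0, q1, q2 = bits
    return (q0 ^ q1, q1 ^ q2)

# Each syndrome points to the single bit that must be flipped back
# (None means no error was detected).
CORRECTION = {
    (0, 0): None,
    (1, 0): 0,
    (1, 1): 1,
    (0, 1): 2,
}

def correct(bits):
    """Use the syndrome to locate a single bit flip and undo it."""
    fixed = list(bits)
    target = CORRECTION[syndrome(bits)]
    if target is not None:
        fixed[target] ^= 1
    return fixed

if __name__ == "__main__":
    encoded = [1, 1, 1]  # logical 1 stored redundantly in three bits
    for flipped in range(3):
        noisy = list(encoded)
        noisy[flipped] ^= 1  # inject one error
        print(noisy, syndrome(noisy), correct(noisy))
```

The two parity checks never reveal whether the stored value is 0 or 1, only where the three bits disagree, which is why syndromes can be read without destroying the encoded information.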

Commonly used QEC schemes are usually slow, and they also result in a rapid loss of information stored in the qubits due to errors they fail to catch and correct in real time. Additionally, such QEC methods employ a conventional quantum measurement approach called projective measurement to get the syndromes. This approach requires several additional qubits, making it resource-intensive.

Instead, Dr. Borah and his colleagues used an approach called continuous measurement. Such measurements can be carried out much more rapidly than conventional projective measurements, and in a highly resource-efficient way. The researchers developed a QEC scheme called the measurement-based estimator scheme for continuous quantum error correction (MBE-CQEC), which could quickly and efficiently detect and correct errors from partial, noisy syndrome measurements. They set up a powerful classical computer to act as an outside controller (or estimator) that estimates errors in the quantum system, filters out the noise perfectly, and applies feedback to correct them.
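The sketch below gives a rough feel for why a noisy, continuously measured syndrome needs filtering before it can trigger a correction. It is not the estimator from the paper: a simple exponential moving average stands in for it, the parity signal is modeled as +1 (aligned) or -1 (not aligned) plus Gaussian noise, and every name and parameter value (`simulate_record`, `ema_filter`, `detect_flip`, the noise level, the smoothing factor) is a hypothetical choice made for illustration.

```python
import random

def simulate_record(n_steps, flip_step, noise_std, rng):
    """Noisy parity record: +1 before flip_step, -1 from flip_step on, plus Gaussian noise."""
    return [
        (1.0 if t < flip_step else -1.0) + rng.gauss(0.0, noise_std)
        for t in range(n_steps)
    ]

def ema_filter(record, alpha):
    """Exponential moving average, a simple low-pass filter for the noisy record."""
    estimate, filtered = 0.0, []
    for sample in record:
        estimate += alpha * (sample - estimate)
        filtered.append(estimate)
    return filtered

def detect_flip(filtered, settle):
    """Return the first step at or after `settle` where the filtered parity is negative."""
    for t in range(settle, len(filtered)):
        if filtered[t] < 0.0:
            return t
    return None

if __name__ == "__main__":
    rng = random.Random(0)
    # Noise twice as large as the signal; an error flips the parity at step 1000.
    record = simulate_record(n_steps=2000, flip_step=1000, noise_std=2.0, rng=rng)
    filtered = ema_filter(record, alpha=0.02)
    print("error flagged at step:", detect_flip(filtered, settle=500))
```

A single raw sample is dominated by noise here, so the sign of one reading says little about the parity. Averaging recovers the signal at the cost of a delay between the error and its detection, and a feedback correction can only be applied once the filtered value crosses the threshold.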

The new QEC scheme is based on a theoretical model that still needs to be validated experimentally on a quantum computer, Dr. Borah explains. Also, it has an important limitation: As the number of qubits in the system increases, real-time simulation of the estimator becomes exponentially slower.
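A back-of-the-envelope calculation shows where this kind of exponential cost can come from. The sketch assumes, for illustration only, that a classical simulation tracks the full density matrix of the qubits, which has 2^n by 2^n complex entries for n qubits, stored at 16 bytes each. The function name `density_matrix_bytes` is hypothetical, and the paper's estimator may be organized differently.

```python
def density_matrix_bytes(n_qubits, bytes_per_entry=16):
    """Memory for a full n-qubit density matrix: (2**n x 2**n) complex entries."""
    return (4 ** n_qubits) * bytes_per_entry

if __name__ == "__main__":
    for n in (3, 10, 20, 30):
        print(n, "qubits:", density_matrix_bytes(n), "bytes")
```

Each added qubit multiplies the storage by four, so three qubits need about a kilobyte while twenty already need terabytes under this assumption.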

“We are working on it, and we hope others in the field will also take up the problem,” Dr. Borah concluded.

More information:

Sangkha Borah et al, Measurement-based estimator scheme for continuous quantum error correction, Physical Review Research (2022). DOI: 10.1103/PhysRevResearch.4.033207

Provided by

Okinawa Institute of Science and Technology

Citation:

MBE-CQEC: A new scheme to correct quantum errors (2022, September 15)