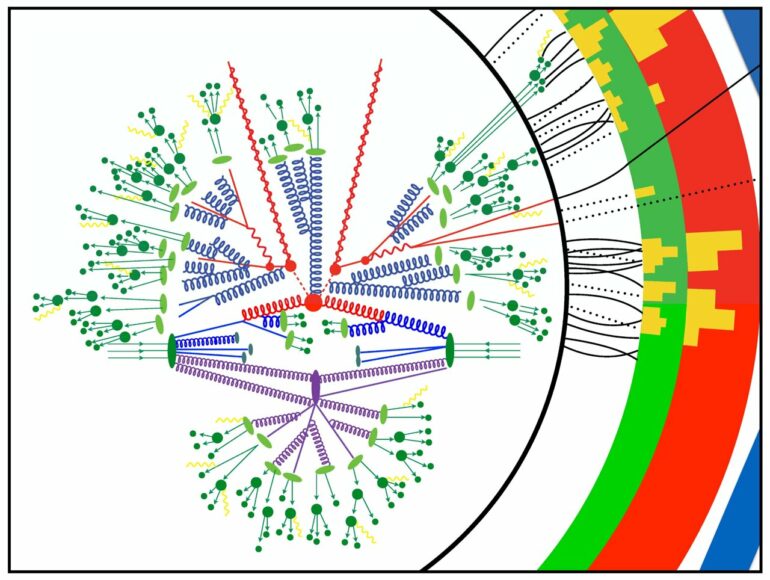

Lawrence Berkeley National Laboratory physicists Christian Bauer, Marat Freytsis and Benjamin Nachman used an IBM Q quantum computer, accessed through the Oak Ridge Leadership Computing Facility’s (OLCF) Quantum Computing User Program (QCUP), to perform part of a calculation describing two colliding protons. The calculation can show the probability that an outgoing particle will emit additional particles.

In the team’s recent paper, published in Physical Review Letters, the researchers describe how they used a method called effective field theory to break their full theory down into components. They then developed a quantum algorithm that computes some of these components on a quantum computer while leaving the rest to classical computers.

“For a theory that’s close to nature, we showed how this would work in principle. Then we took a very simplified version of that theory and did an explicit calculation on a quantum computer,” Nachman said.

The Berkeley Lab team aims to uncover insights about the smallest building blocks of nature by studying high-energy particle collisions at facilities such as the Large Hadron Collider at CERN, near Geneva, Switzerland. To understand what happens in these collisions, the team compares theoretical predictions with the collision debris that detectors actually record.

“One of the difficulties of these kinds of calculations is that we want to describe a large range of energies,” Nachman said. “We want to describe the highest-energy processes down to the lowest-energy processes by analyzing the corresponding particles that fly into our detector.”

Solving these kinds of calculations with a quantum computer alone would require far more qubits than today’s quantum computers provide. Classical computers can handle these problems using approximations, but those approximations ignore important quantum effects. The team therefore split the calculation into pieces, each well suited to either a classical system or a quantum computer.

The team ran experiments on the IBM Q through the OLCF’s QCUP at the U.S. Department of Energy’s Oak Ridge National Laboratory to verify that their quantum algorithms reproduced the expected results on a problem small enough to still be computed and checked on classical computers.

“This is an absolutely critical demonstration problem,” Nachman said. “For us, it’s important that we describe these particles’ properties theoretically and then actually implement a version of them on a quantum computer. A lot of challenges that arise when you run on a quantum computer don’t happen theoretically. Our algorithm scales, so when we get more quantum resources, we will be able to make calculations that we couldn’t make classically.”

The team also aims to make current quantum computers usable for the kinds of science they hope to do. Quantum computers are noisy, and this noise introduces errors into the calculations. To counter it, the team applied error mitigation techniques they had developed in previous work.

Next, the team hopes to add more dimensions to their problem, break their space up into a smaller number of points and scale up the size of their problem. Eventually, they hope to make calculations on a quantum computer that are not possible with classical computers.

“The quantum computers that are available through ORNL’s IBM Q agreement have around 100 qubits, so we should be able to scale up to bigger system sizes,” Nachman said.

The researchers also hope to relax their approximations and move to physics problems that are closer to nature, so that their calculations become more than proofs of concept.

More information:

Christian W. Bauer et al., Simulating Collider Physics on Quantum Computers Using Effective Field Theories, Physical Review Letters 127, 212001 (2021). DOI: 10.1103/PhysRevLett.127.212001

Provided by

Oak Ridge National Laboratory

Citation:

Team simulates collider physics on quantum computer (2022, April 13)