Machine learning (ML) has been used with impressive success in numerous fields—facial recognition, speech recognition, consumer behavior, and drug discovery. One area where it’s had only limited success, though, is as a tool for developing bulk metallic glass.

A team of researchers led by Prof. Jan Schroers set out to figure out why this is, and how they can create ML models that make better glass-forming predictions. Their results are published in Acta Materialia.

Metallic glasses promise a broad range of applications, as they have the strength of the best metals but the pliability of plastic. However, finding the right elements to make metallic glasses has proven a time-consuming task. Metallic glasses owe their properties to their unique atomic structures: when metallic glasses cool from a liquid to a solid, their atoms settle into a random arrangement and do not crystallize the way traditional metals do. But the glass-forming ability (GFA), that is, how easily a metal or alloy can be turned into a glass, is complex and poorly understood.

Some types of materials discovery involve relatively few atoms. In those cases, ML models have delivered many accurate predictions at low cost and have sped up the discovery of materials at unconventional chemical compositions.

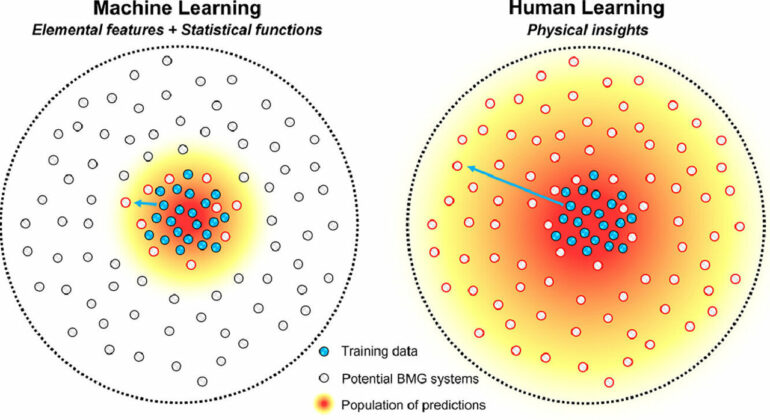

Predicting the glass-forming ability of an alloy, though, is a much more complex problem. Despite hopes that ML could be useful for addressing such complex problems, it has so far performed significantly worse than models based on human learning.

To test ML’s efficacy, the researchers attempted to predict bulk metallic glass formation with it. Specifically, they used a recently developed ML model based on 201 alloy features constructed from the combinations of 31 elemental features. They compared its performance to that of a model developed by Guannan Liu, lead author of the study and a Ph.D. student in Schroers’ lab. Liu’s model used only nonphysical features. Surprisingly, its results were no less accurate than those of ML models based on physical features.

They found that the model needed to incorporate more physical insight. That is, it’s not enough to know the properties of the materials involved; the model must also capture how those properties relate to each other. For instance, including the ratio of the smallest to the largest element in an alloy could improve the results significantly.
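To make the distinction concrete, here is a minimal Python sketch contrasting a feature that only summarizes a property (a composition-weighted mean) with one that relates the elements to each other (the ratio of the smallest to the largest element). It assumes the ratio refers to atomic radius, which the article does not state explicitly. The radius table, the function names, and the example composition are illustrative assumptions, not the features or data used in the study.

```python
# Approximate metallic radii in picometres. Illustrative values only;
# this is NOT the elemental data set used in the study.
ATOMIC_RADIUS_PM = {"Zr": 160.0, "Cu": 128.0, "Al": 143.0, "Ni": 124.0}


def mean_radius(composition):
    """Composition-weighted mean radius: summarizes one property of the alloy."""
    total = sum(composition.values())
    weighted = sum(ATOMIC_RADIUS_PM[el] * frac for el, frac in composition.items())
    return weighted / total


def radius_ratio(composition):
    """Smallest-to-largest radius ratio: relates the alloy's elements to each other."""
    radii = [ATOMIC_RADIUS_PM[el] for el, frac in composition.items() if frac > 0]
    return min(radii) / max(radii)


# Example composition given as atomic fractions (chosen for illustration).
alloy = {"Zr": 0.65, "Cu": 0.175, "Ni": 0.10, "Al": 0.075}

features = {
    "mean_radius_pm": mean_radius(alloy),
    "radius_ratio": radius_ratio(alloy),
}
print(features)
```

Two alloys can share nearly the same mean radius while having very different size ratios, which is one way a relational feature can carry information that a per-property summary does not.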

“Even if we provide very little physical insights in constructing the machine learning model, the outcome is dramatically better,” said Schroers, professor of mechanical engineering & materials science. “There has to be a little bit of human learning with the machine learning, otherwise the predictions of ML are essentially useless.”

The properties by themselves don’t provide enough information. Schroers compares it to analyzing a work of literature.

“If you read Shakespeare and say ‘Oh, he uses a lot of the letter P and also the letter S,’ that doesn’t describe Shakespeare,” he said. “But how did Shakespeare put them together? That’s the missing part. Even knowing just a little bit how he puts the letters together makes the predictions significantly more powerful (in identifying and imitating Shakespeare) than just the letters themselves.”

Liu said that to build off their findings, the researchers want to train a machine learning model with more physical insights.

More information:

Guannan Liu et al, Machine learning versus human learning in predicting glass-forming ability of metallic glasses, Acta Materialia (2022). DOI: 10.1016/j.actamat.2022.118497

Citation:

For glass discovery, machine learning needs human help (2023, January 18)