Researchers at the Department of Energy’s SLAC National Accelerator Laboratory have demonstrated a new way to peer deeper into the complex behavior of materials. The team harnessed the power of machine learning to interpret coherent excitations, the collective swinging of atomic spins within a system.

This groundbreaking research, published recently in Nature Communications, could make experiments more efficient by providing real-time guidance to researchers during data collection. It is part of a project led by Howard University, with researchers at SLAC and Northeastern University, that uses machine learning to accelerate materials research.

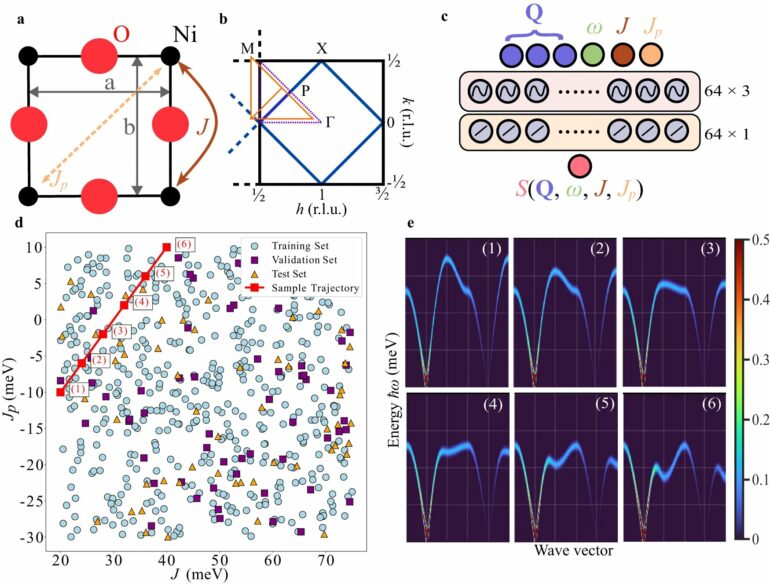

The team created this new data-driven tool using “neural implicit representations,” a machine learning development used in computer vision and across different scientific fields such as medical imaging, particle physics and cryo-electron microscopy. This tool can swiftly and accurately derive unknown parameters from experimental data, automating a procedure that, until now, required significant human intervention.

Peculiar behaviors

Collective excitations help scientists understand the rules governing systems with many parts, such as magnetic materials. When seen at the smallest scales, certain materials show peculiar behaviors, like tiny changes in the patterns of atomic spins. These properties are key for many new technologies, such as advanced spintronics devices that could change how we transfer and store data.

To study collective excitations, scientists use techniques such as inelastic neutron or X-ray scattering. However, these methods are not only intricate, but also resource-intensive given, for example, the limited availability of neutron sources.

Machine learning offers a way to address these challenges, although even then there are limitations. Past experiments used machine learning techniques to enhance the accuracy of X-ray and neutron scattering data interpretation. These efforts relied on traditional image-based data representations. But the team’s new approach, using neural implicit representations, takes a different route.

Neural implicit representations use coordinates, like points on a map, as inputs. In image processing, these networks can predict the color of a particular pixel based on its position. The method doesn’t directly store the image but creates a recipe for how to interpret it by connecting the pixel coordinate to its color. This allows it to make detailed predictions, even between pixels. Such models have proven effective in capturing intricate details in images and scenes, making them promising for analyzing quantum materials data.
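The idea of mapping a coordinate to a value can be illustrated with a very small example. The sketch below is not the team’s model: it is a toy network with one hidden layer, written with only the Python standard library, that learns a 4x4 grayscale gradient and is then queried at a point between pixel centers. The image, the layer size, the learning rate and all function names are assumptions chosen for illustration.

```python
import math
import random

# Toy 4x4 grayscale "image": a smooth diagonal gradient with values in [0, 1].
SIZE = 4
image = [[(r + c) / (2 * (SIZE - 1)) for c in range(SIZE)] for r in range(SIZE)]

# Training set: normalized pixel coordinates (x, y) -> pixel intensity.
samples = [((c / (SIZE - 1), r / (SIZE - 1)), image[r][c])
           for r in range(SIZE) for c in range(SIZE)]

HIDDEN = 8  # assumed hidden-layer width
rng = random.Random(0)
# One hidden tanh layer; each hidden unit holds weights for x and y plus a bias.
w1 = [[rng.uniform(-0.5, 0.5) for _ in range(3)] for _ in range(HIDDEN)]
w2 = [rng.uniform(-0.5, 0.5) for _ in range(HIDDEN)]
b2 = 0.0


def forward(x, y):
    """Return (prediction, hidden activations) for one coordinate."""
    hidden = [math.tanh(wx * x + wy * y + b) for wx, wy, b in w1]
    return sum(w * h for w, h in zip(w2, hidden)) + b2, hidden


def mean_squared_error():
    return sum((forward(x, y)[0] - t) ** 2 for (x, y), t in samples) / len(samples)


def train(steps=2000, lr=0.05):
    """Full-batch gradient descent on the mean squared error."""
    global b2
    n = len(samples)
    for _ in range(steps):
        g_w1 = [[0.0, 0.0, 0.0] for _ in range(HIDDEN)]
        g_w2 = [0.0] * HIDDEN
        g_b2 = 0.0
        for (x, y), target in samples:
            pred, hidden = forward(x, y)
            d_out = 2.0 * (pred - target) / n
            g_b2 += d_out
            for j, h in enumerate(hidden):
                g_w2[j] += d_out * h
                d_pre = d_out * w2[j] * (1.0 - h * h)  # tanh derivative
                g_w1[j][0] += d_pre * x
                g_w1[j][1] += d_pre * y
                g_w1[j][2] += d_pre
        for j in range(HIDDEN):
            for k in range(3):
                w1[j][k] -= lr * g_w1[j][k]
            w2[j] -= lr * g_w2[j]
        b2 -= lr * g_b2


initial_loss = mean_squared_error()
train()
final_loss = mean_squared_error()

# Query the learned function at a coordinate that lies between pixel centers.
between_pixels = forward(0.5, 0.5)[0]
print(f"loss {initial_loss:.4f} -> {final_loss:.6f}; "
      f"value at (0.5, 0.5) = {between_pixels:.3f}")
```

The network never stores the 16 pixel values directly. Its weights encode a continuous function of position, which is why it can be evaluated at (0.5, 0.5) even though no pixel sits there.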

“Our motivation was to understand the underlying physics of the sample we were studying. While neutron scattering can provide invaluable insights, it requires sifting through massive data sets, of which only a fraction is pertinent,” said co-author Alexander Petsch, a postdoctoral research associate at SLAC’s Linac Coherent Light Source (LCLS) and Stanford Institute for Materials and Energy Sciences (SIMES).

“By simulating thousands of potential results, we constructed a machine learning model trained to discern nuanced differences in data curves that are virtually indistinguishable to the human eye.”

Pieces falling into place

The team wanted to see if they could make measurements at LCLS, feed them into a machine learning algorithm, and recover the microscopic details of the material as they measured. They ran thousands of simulations of what they expected to measure, covering a range of parameters, and fed the resulting spectra into an algorithm. Having learned from all of these simulated spectra, the algorithm could predict the underlying theoretical parameters as soon as real spectra were measured.
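The simulate-first, measure-later workflow can be sketched in a few lines. The example below is a simplified stand-in and not the published method: a brute-force search for the closest simulated spectrum replaces the trained network, and the dispersion formula, the Gaussian peak shape, the noise level and every name (`simulate_spectrum`, `TRUE_COUPLING`, and so on) are assumptions made for illustration.

```python
import math
import random


def simulate_spectrum(coupling, energies, momenta, width=0.3):
    """Toy intensity on a (momentum, energy) grid: one Gaussian peak per momentum."""
    spectrum = []
    for q in momenta:
        peak = 2.0 * coupling * (1.0 - math.cos(q))  # assumed toy dispersion
        spectrum.extend(math.exp(-0.5 * ((e - peak) / width) ** 2) for e in energies)
    return spectrum


energies = [0.1 * i for i in range(101)]            # 0 to 10, arbitrary units
momenta = [math.pi / 2, 3 * math.pi / 4, math.pi]
candidates = [0.5 + 0.01 * i for i in range(151)]   # couplings from 0.5 to 2.0

# Step 1: simulate a library of spectra before any "measurement" is taken.
library = {j: simulate_spectrum(j, energies, momenta) for j in candidates}

# Step 2: fake a noisy measurement generated from a hidden "true" coupling.
rng = random.Random(1)
TRUE_COUPLING = 1.3
measured = [v + rng.gauss(0.0, 0.05)
            for v in simulate_spectrum(TRUE_COUPLING, energies, momenta)]


def misfit(simulated):
    """Sum of squared differences between a simulated and the measured spectrum."""
    return sum((s - m) ** 2 for s, m in zip(simulated, measured))


# Step 3: pick the simulated spectrum that lies closest to the measurement.
best_coupling = min(library, key=lambda j: misfit(library[j]))
print(f"recovered coupling = {best_coupling:.2f} (true value {TRUE_COUPLING})")
```

Because all of the simulation happens up front, the only work left at measurement time is the comparison in step 3. A trained model plays the same role in the approach described above, but can interpolate between simulated parameter values instead of being limited to a fixed grid.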

While the team was waiting to carry out this experiment at LCLS, it turned out that the measurements they wanted to make were very similar to inelastic neutron scattering. Petsch realized that neutron scattering data from his thesis aligned perfectly with the team’s simulations, which were led by Zhurun (Judy) Ji, a Stanford University Science Fellow. When the team applied their machine learning model to this real-world data, it was able to overcome challenges such as noise and missing data points.

Traditionally, researchers rely on intuition, simulations, and post-experiment analysis to guide their next steps. The team demonstrated how their approach could instead analyze data continuously, in real time. One of the most exciting outcomes is that this could let researchers determine when they have gathered enough data to end an experiment, further streamlining the process.
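As a rough illustration of deciding when enough data has been collected, the sketch below keeps taking noisy readings and stops once the estimate is stable. It is only an analogy: a plain standard-error rule stands in for whatever confidence measure a trained model would provide, and the function name, tolerance, noise level and peak value are all hypothetical.

```python
import math
import random


def measure_until_confident(measure, tolerance, min_samples=10, max_samples=1000):
    """Collect readings until the standard error of the mean drops below tolerance."""
    readings = []
    while len(readings) < max_samples:
        readings.append(measure())
        n = len(readings)
        if n < min_samples:
            continue  # too few readings for a meaningful error estimate
        mean = sum(readings) / n
        variance = sum((r - mean) ** 2 for r in readings) / (n - 1)
        if math.sqrt(variance / n) < tolerance:
            break  # estimate is stable enough; stop collecting
    return sum(readings) / len(readings), len(readings)


rng = random.Random(2)
TRUE_PEAK = 5.2  # hypothetical peak energy, arbitrary units
estimate, count = measure_until_confident(
    lambda: rng.gauss(TRUE_PEAK, 0.5), tolerance=0.05)
print(f"stopped after {count} readings with estimate {estimate:.2f}")
```

The stopping check runs after every new reading, which mirrors the idea of analysis that keeps pace with data collection rather than waiting until the experiment is over.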

“Our machine learning model, trained before the experiment even begins, can rapidly guide the experimental process,” said SLAC scientist Josh Turner, who oversaw the research. “It could change the way experiments are conducted at facilities like LCLS.”

Opening up new avenues

The model’s design isn’t exclusive to neutron scattering. Named the “coordinate network,” it can be adapted to a variety of scattering measurements that record data as a function of energy and momentum.

“Machine learning and artificial intelligence are influencing many different areas of science,” said co-author Sathya Chitturi, a Ph.D. student at Stanford University. “Applying new cutting-edge machine learning methods to physics research can enable us to make faster advancements and streamline experiments. It’s exciting to consider what we can tackle next based on these foundations. It opens up many new potential avenues of research.”

More information:

Sathya R. Chitturi et al, Capturing dynamical correlations using implicit neural representations, Nature Communications (2023). DOI: 10.1038/s41467-023-41378-4

Provided by

SLAC National Accelerator Laboratory

Citation:

New AI-driven tool streamlines experiments (2023, October 12)