November 17, 2020


When developing robotic systems and computational tools, computer scientists often draw inspiration from animals or other biological systems. Depending on a system’s characteristics and purpose, nature often offers specific examples of how its goals could be achieved rapidly and effectively.

Researchers at Shanghai Jiaotong University have recently developed a new bio-inspired and computer vision-based obstacle avoidance system that could improve the navigation of flying robots operating in dynamic environments. This system, presented in a paper pre-published on arXiv, is inspired by how owls detect and avoid objects or other animals in their surroundings.

“Although owls are unable to move their eyes in any direction (similarly to stereo cameras), they have a very flexible neck that can swivel up to 270 degrees, which enables them to rapidly observe even behind without relocating their torso,” the researchers write in their paper.

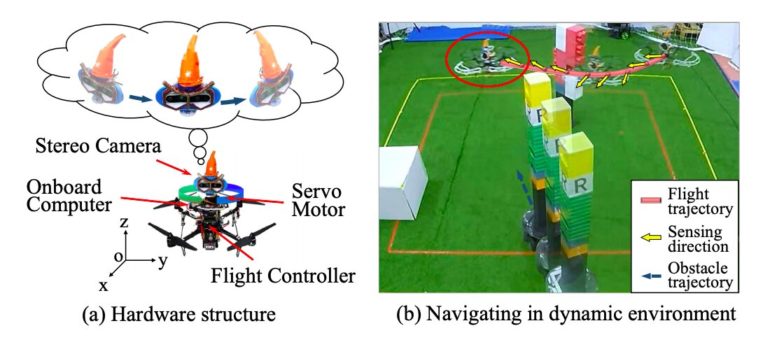

To replicate the way in which owls turn their heads to look in different directions and detect both static and moving objects around them, the researchers mounted a servo motor and a stereo camera on a quadrotor (i.e., an unmanned flying robot with four rotors). In their design, the servo motor acts as a neck and the stereo camera as a head. Because the stereo camera is light, it can rotate much faster than the robot’s body, and its movements barely affect how the robot moves or the direction it is flying in.

The system uses a sensor-planning algorithm to estimate how much the robot would benefit from sensing objects in different directions and plans the angle at which its “head” (i.e., the stereo camera) should rotate accordingly. Thus, the quadrotor continuously and actively senses its surroundings, rapidly identifying obstacles in its way.
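To make the idea of sensor planning more concrete, here is a minimal Python sketch of how a camera angle could be chosen by scoring candidate directions. It is not the algorithm from the paper: the scoring rule (directions that have not been observed for a while and that lie close to the direction of travel are worth more), the 90-degree field of view, and the names `angle_diff`, `sensing_benefit` and `plan_head_angle` are all assumptions made for illustration.

```python
import math


def angle_diff(a, b):
    """Signed difference a - b, wrapped to the interval [-pi, pi)."""
    return (a - b + math.pi) % (2 * math.pi) - math.pi


def sensing_benefit(head_angle, travel_angle, last_seen, now,
                    fov=math.radians(90)):
    """Hypothetical benefit of pointing the camera at head_angle.

    last_seen maps a direction (radians) to the time it was last observed.
    Only directions inside the assumed field of view contribute.
    """
    score = 0.0
    for direction, t_seen in last_seen.items():
        if abs(angle_diff(direction, head_angle)) <= fov / 2:
            staleness = now - t_seen  # longer unseen means more to gain
            # 1.0 straight ahead, 0.0 directly behind (assumed weighting)
            relevance = 0.5 * (1 + math.cos(angle_diff(direction, travel_angle)))
            score += staleness * relevance
    return score


def plan_head_angle(candidates, travel_angle, last_seen, now):
    """Pick the candidate camera angle with the highest estimated benefit."""
    return max(candidates,
               key=lambda a: sensing_benefit(a, travel_angle, last_seen, now))
```

In this sketch, a robot flying straight ahead that has just looked forward but has not looked to its left for a long time would turn its camera to the left, since that is where a new observation is estimated to help the most.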

In addition, the system tracks and predicts the trajectories of moving obstacles in its vicinity, adapting its movements to changes in the surrounding environment. Finally, based on the data collected by the stereo camera, the system uses a sampling-based path planner to plan a collision-free path, outlining the movements that would allow the robot to reach a specific location or complete a mission without colliding with other objects and being damaged.
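The two steps described here, predicting where moving obstacles will be and sampling candidate paths that avoid them, can be sketched in a few lines of Python. This is only an illustration under strong assumptions: obstacles are assumed to move at constant velocity in 2D, and the planner is a deliberately simple stand-in that samples single-waypoint detours rather than the planner used in the paper. The names `predict`, `segment_is_safe` and `plan_path`, as well as all parameter values, are hypothetical.

```python
import math
import random


def predict(obstacle, t):
    """Constant-velocity prediction; obstacle is (x, y, vx, vy)."""
    x, y, vx, vy = obstacle
    return (x + vx * t, y + vy * t)


def segment_is_safe(p, q, t0, speed, obstacles, radius, steps=20):
    """Check points along segment p -> q, flown at constant speed from time t0,
    against the predicted obstacle positions at the matching times."""
    duration = math.dist(p, q) / speed
    for i in range(steps + 1):
        s = i / steps
        point = (p[0] + s * (q[0] - p[0]), p[1] + s * (q[1] - p[1]))
        t = t0 + s * duration
        if any(math.dist(point, predict(o, t)) < radius for o in obstacles):
            return False
    return True


def plan_path(start, goal, obstacles, speed=1.0, radius=0.5,
              samples=200, margin=2.0, rng=None):
    """Return the direct path if it is safe; otherwise the shortest safe
    single-waypoint detour found by random sampling, or None."""
    rng = rng or random.Random(0)  # fixed seed keeps the sketch repeatable
    if segment_is_safe(start, goal, 0.0, speed, obstacles, radius):
        return [start, goal]
    lo_x, hi_x = min(start[0], goal[0]) - margin, max(start[0], goal[0]) + margin
    lo_y, hi_y = min(start[1], goal[1]) - margin, max(start[1], goal[1]) + margin
    best, best_len = None, math.inf
    for _ in range(samples):
        w = (rng.uniform(lo_x, hi_x), rng.uniform(lo_y, hi_y))
        first_leg = math.dist(start, w)
        length = first_leg + math.dist(w, goal)
        if (length < best_len
                and segment_is_safe(start, w, 0.0, speed, obstacles, radius)
                and segment_is_safe(w, goal, first_leg / speed, speed,
                                    obstacles, radius)):
            best, best_len = [start, w, goal], length
    return best
```

Because each point on a candidate path is checked against the obstacle position predicted for the time the robot would be there, a path can pass through a spot that an obstacle occupies now, as long as the obstacle is expected to have moved away by then.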

“Altogether, this system is called an active sense and avoid (ASAA) system,” the researchers explain in their paper. “As far as we know, this is the first system that applies active stereo vision to realize obstacle avoidance for flying robots.”

The researchers at Shanghai Jiaotong University evaluated their ASAA system in a series of experiments conducted in real environments. In these experiments, a quadrotor either had to reach a desired location while avoiding all obstacles in its way or had to monitor and catch an artificial rat. The results of these tests are very promising, as the robot performed well on both tasks, rapidly adapting to abrupt changes in its environment and avoiding collisions with both static and moving obstacles.

Moreover, the prototype fabricated by the researchers employs a single stereo camera; thus, it is relatively inexpensive. This may make it easier to manufacture and implement on a large scale.

In the future, this system could be used to carry out missions in a wide range of environments, from urban areas to natural environments largely populated by wildlife. The system could also inspire the development of other flying robots with enhanced obstacle avoidance capabilities based on similar designs. In their next studies, the researchers will try to create systems that replicate the behavior of other animals, while also using reinforcement learning techniques to further improve their system’s sensing performance.


More information:

Chen et al., Bio-inspired obstacle avoidance for flying robots with active sensing. arXiv:2010.04977 [cs.RO]. arxiv.org/abs/2010.04977

2020 Science X Network

Citation: An obstacle avoidance system for flying robots inspired by owls (2020, November 17), retrieved 18 November 2020 from https://techxplore.com/news/2020-11-obstacle-robots-owls.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.